Facilitating hybrid modelling in scientific computing

Cambridge University RSE Seminars

2026-02-26

Precursors

Slides and Materials

To access links or follow on your own device these slides can be found at:

jackatkinson.net/slides

Licensing

Except where otherwise noted, these presentation materials are licensed under the Creative Commons Attribution-NonCommercial 4.0 International (CC BY-NC 4.0) License.

Vectors and icons by SVG Repo under CC0(1.0) or FontAwesome under SIL OFL 1.1

Motivation

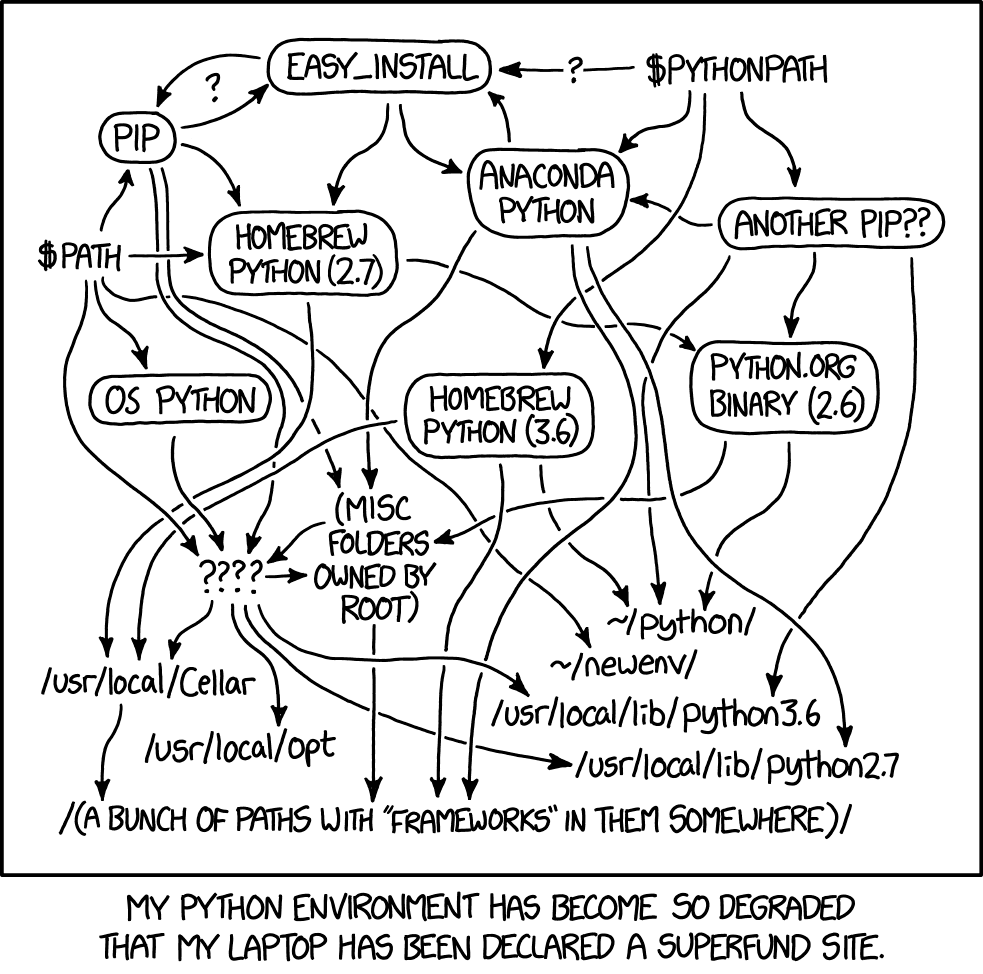

Weather and Climate Models

Large, complex, many-part systems.

Parameterisation

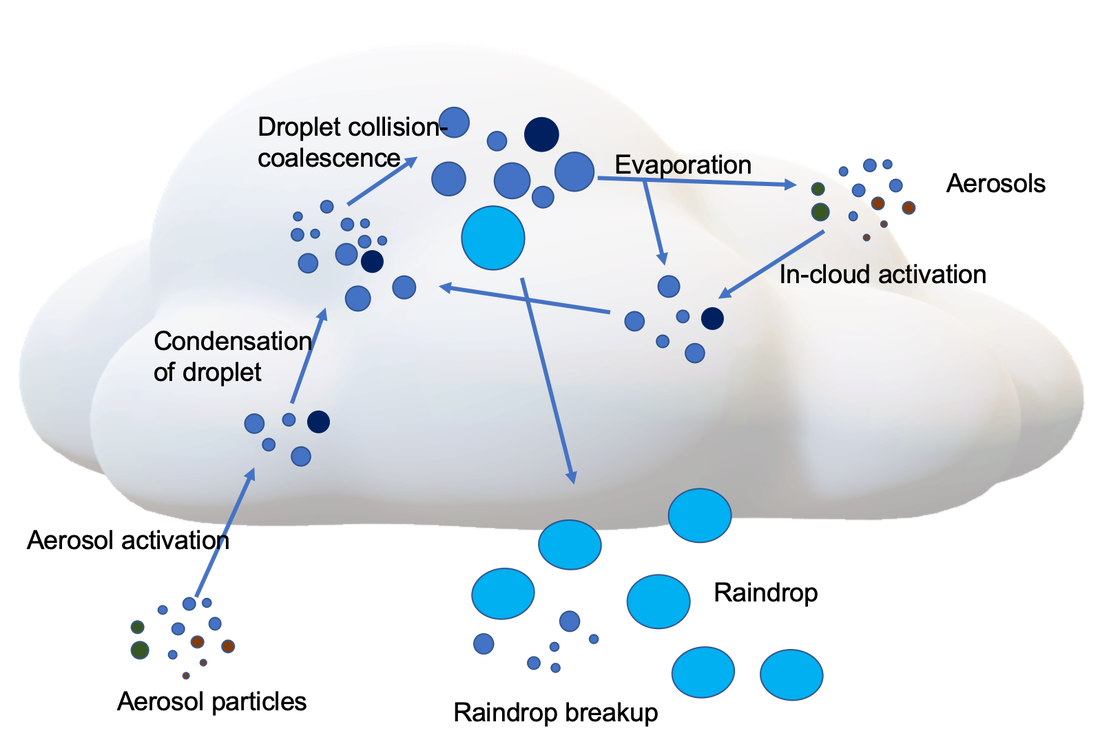

Subgrid processes are the largest source of uncertainty

Microphysics by Sisi Chen under Public Domain

Staggered grid by NOAA under Public Domain

Globe grid with box by Caltech under Fair use

Machine Learning in Science

We typically think of Deep Learning as an end-to-end process; a black box with an input and an output.

Who’s that Pokémon?

\[\begin{bmatrix}\vdots\\a_{23}\\a_{24}\\a_{25}\\a_{26}\\a_{27}\\\vdots\\\end{bmatrix}=\begin{bmatrix}\vdots\\0\\0\\1\\0\\0\\\vdots\\\end{bmatrix}\] It’s Pikachu!

Neural Net by 3Blue1Brown under fair dealing.

Pikachu © The Pokemon Company, used under fair dealing.

Hybrid Modelling

Neural Net by 3Blue1Brown under fair dealing.

Pikachu © The Pokemon Company, used under fair dealing.

Challenges

- Reproducibility

- Ensure net functions the same in-situ

- Re-usability

- Make ML parameterisations available to many models

- Facilitate easy re-training/adaptation

- Language Interoperation

Language interoperation

Many large scientific models are written in Fortran (or C, or C++).

Much machine learning is conducted in Python.

Mathematical Bridge by cmglee used under CC BY-SA 3.0

PyTorch, the PyTorch logo and any related marks are trademarks of The Linux Foundation.

TensorFlow, the TensorFlow logo and any related marks are trademarks of Google Inc.

Possible solutions

Pure Fortran

- Implement a NN in Fortran

- Additional work, reproducibility issues, hard for complex architectures.

- Fortran ML frameworks

- Academic, limited functionality and support. Cannot leverage PyTorch features.

- e.g. Neural-Fortran and FIATS.

Language Interoperation

- Forpy

- Easy to add, harder to use with ML, GPL, unmaintained(?).

- SmartSim

- Python ‘control centre’ around Redis by Cray/HPE.

- Generic/versatile, learning curve, data copying.

- Fortran-Keras Bridge

- Keras only, abandonware(?).

- TorchFort

- Similar approach to FTorch, focussed on (and by) NVIDIA.

Efficiency

We consider 2 types:

Computational

Developer

In research both have an effect on ‘time-to-science’.

Especially when extensive research software support is unavailable.

FTorch

Approach

- PyTorch has a C++ backend and provides an API.

- Binding Fortran to C is straightforward from Fortran 2003 onwards using iso_c_binding.

We will:

- Save the PyTorch models in a portable TorchScript format

  - to be run by libtorch C++

- Provide a Fortran API

  - wrapping the libtorch C++ API

  - abstracting complex details from users

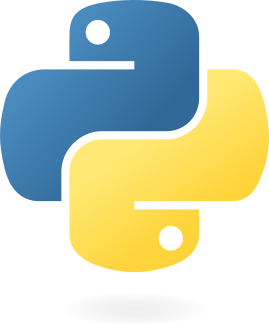

Approach

Python

env

Python

runtime

xkcd #1987 by Randall Munroe, used under CC BY-NC 2.5

Highlights - Developer

- Easy to clone, build, and install

- CMake, supported on Linux, macOS™, and Windows™

- Easy to link

Build using CMake, or link via Make like NetCDF (instructions included):

FCFLAGS+=" $(pkg-config --cflags ftorch)" LDFLAGS+=" $(pkg-config --libs ftorch)"

Find it on :

Highlights - Developer

- User tools

- pt2ts.py aids users in saving PyTorch models to TorchScript

- Examples suite

- Take users through full process from trained net to Fortran inference for multiple applications

- Full API documentation online at cambridge-iccs.github.io/FTorch

- FOSS

- licensed under MIT

- active contribution from users via GitHub

Highlights - Computation

- Use framework’s implementations directly

- feature and future support, and reproducible

- Make use of the Torch backends for GPU offload

- CUDA, HIP, MPS, and XPU enabled

- Indexing issues and associated reshape avoided with Torch strided accessor.

- No-copy access in memory (on CPU).
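The indexing point can be illustrated with a small sketch. Fortran stores arrays column-major, whereas Torch tensors default to row-major, so handing memory across naively would need a transpose or reshape. Describing the same buffer with explicit strides avoids that. The pure-Python example below uses a flat list as a stand-in for shared memory; the helper names are hypothetical and are not part of FTorch or Torch.

```python
def column_major_strides(shape):
    """Strides (in elements) for Fortran ordering: the first index varies fastest."""
    strides, step = [], 1
    for extent in shape:
        strides.append(step)
        step *= extent
    return tuple(strides)


def row_major_strides(shape):
    """Strides (in elements) for C ordering: the last index varies fastest."""
    strides, step = [], 1
    for extent in reversed(shape):
        strides.append(step)
        step *= extent
    return tuple(reversed(strides))


def element(buffer, strides, index):
    """Read one element of a flat buffer through a strided view (0-based indices)."""
    offset = sum(i * s for i, s in zip(index, strides))
    return buffer[offset]


# A 2 x 3 array with A(i, j) = 10*i + j, laid out in memory as Fortran would store it.
shape = (2, 3)
fortran_buffer = [10 * i + j for j in range(shape[1]) for i in range(shape[0])]

f_strides = column_major_strides(shape)
c_strides = row_major_strides(shape)

# With the correct strides, A(0, 1) is read in place: no copy, no reshape.
value_with_strides = element(fortran_buffer, f_strides, (0, 1))

# Assuming a row-major layout for the same memory silently reads a different element.
value_misread = element(fortran_buffer, c_strides, (0, 1))
```

Only the stride metadata differs between the two reads; the buffer itself is never rearranged, which is the idea behind no-copy access on CPU.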

Some code

Model - Saving from Python

import torch

import torchvision

# Load pre-trained model and put in eval mode

model = torchvision.models.resnet18(weights="IMAGENET1K_V1")

model.eval()

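# Create dummy input

dummy_input = torch.ones(1, 3, 224, 224)

# Save to TorchScript, choosing between tracing and scripting

trace = True  # assumed flag: set to False to use torch.jit.script instead

if trace:

    ts_model = torch.jit.trace(model, dummy_input)

else:

    ts_model = torch.jit.script(model)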

frozen_model = torch.jit.freeze(ts_model)

frozen_model.save("/path/to/saved_model.pt")

TorchScript

- Statically typed subset of Python read by the Torch C++ API

- Intermediate representation/graph of NN, including weights and biases

Fortran

use ftorch

implicit none

real, dimension(5), target :: in_data, out_data ! Fortran data structures

type(torch_tensor), dimension(1) :: input_tensors, output_tensors ! Set up Torch data structures

type(torch_model) :: torch_net

in_data = ... ! Prepare data in Fortran

! Create Torch input/output tensors from the Fortran arrays

call torch_tensor_from_array(input_tensors(1), in_data, torch_kCPU)

call torch_tensor_from_array(output_tensors(1), out_data, torch_kCPU)

call torch_model_load(torch_net, 'path/to/saved/model.pt', torch_kCPU) ! Load ML model

call torch_model_forward(torch_net, input_tensors, output_tensors) ! Infer

call further_code(out_data) ! Use output data in Fortran immediately

! Cleanup (not strictly required, as finalizers are implemented)

call torch_delete(torch_net)

call torch_delete(input_tensors)

call torch_delete(output_tensors)

GPU Acceleration

Cast Tensors to GPU in Fortran:

! Load in from TorchScript

call torch_model_load(torch_net, 'path/to/saved/model.pt', torch_kCUDA, device_index=0)

! Cast Fortran data to Tensors

call torch_tensor_from_array(input_tensors(1), in_data, torch_kCUDA, device_index=0)

call torch_tensor_from_array(output_tensors(1), out_data, torch_kCPU)

! Inference and usage as usual

call torch_model_forward(torch_net, input_tensors, output_tensors)

call further_code(out_data)

FTorch supports NVIDIA CUDA, AMD HIP, Intel XPU, and Apple Silicon MPS hardware.

Use of multiple devices supported.

Publication & tutorials

FTorch is published in JOSS!

Atkinson et al. (2025)

FTorch: a library for coupling PyTorch models to Fortran.

Journal of Open Source Software, 10(107), 7602,

DOI: 10.21105/joss.07602

Please cite if you use FTorch!

In addition to the comprehensive worked examples in the FTorch repository we provide an online workshop at /Cambridge-ICCS/FTorch-workshop.

Applications and Case Studies

MiMA - Uncertainty Quant.

- The origins of FTorch

- Emulation of existing parameterisation

- Coupled to an atmospheric model using forpy in Espinosa et al. (2022)

  - Prohibitively slow and hard to implement

- Asked for a faster, user-friendly implementation that can be used in future studies.

- Follow up paper using FTorch: Uncertainty Quantification of a Machine Learning Subgrid-Scale Parameterization for Atmospheric Gravity Waves (Mansfield and Sheshadri 2024)

- “Identical” offline networks have very different behaviours when deployed online.

ICON - Multiple Parameterisations

Work led by Julien Savre at DLR

- Closely affiliated fork of ICON

- Multiple ML components in one model

- Common FTorch interface

- A focus on running on GPU

- Implemented without ICCS involvement

CESM coupling

- The Community Earth System Model

- Part of CMIP (Coupled Model Intercomparison Project)

- FTorch integrated into the build system (CIME) by Jack Atkinson, Will Chapman, and Jim Edwards

- Used by Will Chapman for model bias correction of CESM through learning model biases compared to ERA5 (Chapman and Berner 2025).

FTorch has recently been selected as the interface for the ML-Enhanced CESM. Watch this space…

Derecho by NCAR

Turbulent Plasmas

- Work by UKAEA

- Surrogate models for turbulence in plasma gyrokinetics

- Turbulence significant during tokamak ramp-up

- Gaussian process models implemented in GPyTorch

- Coupled to JINTRAC code using FTorch

- 100x speedup over traditional code

- 2 weeks to 4 hours on 60 cores

STEP Tokamak Design by UK Industrial Fusion Solutions under CC BY 4.0

Others

See FTorch/community/case_studies for a full list.

- To replace a BiCGStab bottleneck in the GloSea6 Seasonal Forecasting model (Park and Chung 2025).

- Bias correction of CESM through learning model biases compared to ERA5 (Chapman and Berner 2025).

- Implementation of nonlinear interactions in the WaveWatch III model (Ikuyajolu et al. 2025).

- Stable embedding of a convection resolving parameterisation in E3SM (Hu et al. 2025).

- ClimSim Convection scheme in ICON for stable 20-year AMIP run (Heuer et al. 2025) (preprint).

- Review paper of hybrid modelling approaches (Zheng et al. 2025) (preprint).

- Implementation of a new convection trigger in the CAM model. Miller et al. In Preparation.

- Embedding non-local ML schemes for gravity waves in the CAM model. ICCS & DataWave.

Online Training and Autograd

Work led by Joe Wallwork

What and Why?

To date FTorch has focussed on enabling researchers to run models developed and trained offline within Fortran codes.

However, it is clear (Mansfield and Sheshadri 2024) that online performance, and the options opened up by differentiable/hybrid models (e.g. Kochkov et al. 2024), deserve more attention.

Pros:

- Avoids saving large volumes of training data.

- Avoids need to convert between Python and Fortran data formats.

- Possibility to expand loss function scope to include downstream model code.

Cons:

- Difficult to implement in most frameworks.

Expanded Loss function

Suppose we want to use a loss function involving downstream model code, e.g.,

\[J(\theta)=\int_\Omega(u-u_{ML}(\theta))^2\;\mathrm{d}x,\]

where \(u\) is the solution from the physical model and \(u_{ML}(\theta)\) is the solution from a hybrid model with some ML parameters \(\theta\).

Computing \(\mathrm{d}J/\mathrm{d}\theta\) requires differentiating Fortran code as well as ML code.
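To make the chain rule explicit, here is a minimal sketch in plain Python. It assumes a toy hybrid model in which a one-parameter "ML" component feeds a simple downstream function standing in for the Fortran model code; both functions, the domain \(\Omega=[0,1]\), and the trapezoidal quadrature are illustrative assumptions rather than anything from FTorch. The point is that the gradient of \(J\) needs the derivative of the downstream code as well as that of the ML component.

```python
def ml_component(theta, x):
    """Stand-in for the ML model: one trainable parameter theta."""
    return theta * x


def downstream(p):
    """Stand-in for downstream physical model code acting on the ML output."""
    return p + 0.5 * p * p


def downstream_derivative(p):
    """Derivative of the downstream code; in practice this is the hard part."""
    return 1.0 + p


def trapezoid(values, dx):
    """Composite trapezoidal rule on a uniform grid."""
    return dx * (sum(values) - 0.5 * (values[0] + values[-1]))


N = 101
DX = 1.0 / (N - 1)
XS = [i * DX for i in range(N)]
THETA_TRUE = 2.0
# Reference solution u, generated here by the same toy model with a known theta.
U_REF = [downstream(ml_component(THETA_TRUE, x)) for x in XS]


def loss(theta):
    """Discretised J(theta) = integral of (u - u_ML(theta))^2 over [0, 1]."""
    residuals = [(u - downstream(ml_component(theta, x))) ** 2 for u, x in zip(U_REF, XS)]
    return trapezoid(residuals, DX)


def loss_gradient(theta):
    """dJ/dtheta by the chain rule through the downstream code and the ML component."""
    integrand = []
    for u, x in zip(U_REF, XS):
        p = ml_component(theta, x)
        # d(u_ML)/d(theta) = downstream'(p) * d(p)/d(theta), with d(p)/d(theta) = x
        integrand.append(-2.0 * (u - downstream(p)) * downstream_derivative(p) * x)
    return trapezoid(integrand, DX)


theta = 1.0
eps = 1.0e-6
finite_difference = (loss(theta + eps) - loss(theta - eps)) / (2.0 * eps)
analytic = loss_gradient(theta)
```

Here `downstream_derivative` is written by hand. For a real model that derivative would have to come from differentiating the Fortran code, which is the difficulty this slide highlights.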

Implementing AD in FTorch

- Expose autograd functionality from Torch.

  - e.g., requires_grad argument and backward methods.

- Overload mathematical operators (=, +, -, *, /, **).
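The idea behind operator overloading for reverse-mode AD can be sketched with a small scalar class in plain Python. This is an illustrative toy and not how Torch or FTorch implement autograd: the `Var` class and its methods are hypothetical, every variable is tracked (there is no `requires_grad` switch), and only constant exponents are supported. Each overloaded operator records its inputs and local derivatives so that `backward` can apply the chain rule in reverse.

```python
class Var:
    """Scalar that records how it was computed so gradients can be propagated back."""

    def __init__(self, value, parents=()):
        self.value = float(value)
        self.parents = parents  # tuple of (parent Var, local derivative) pairs
        self.grad = 0.0

    @staticmethod
    def _wrap(other):
        return other if isinstance(other, Var) else Var(other)

    def __add__(self, other):
        other = Var._wrap(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __sub__(self, other):
        other = Var._wrap(other)
        return Var(self.value - other.value, ((self, 1.0), (other, -1.0)))

    def __mul__(self, other):
        other = Var._wrap(other)
        return Var(self.value * other.value, ((self, other.value), (other, self.value)))

    def __truediv__(self, other):
        other = Var._wrap(other)
        return Var(
            self.value / other.value,
            ((self, 1.0 / other.value), (other, -self.value / other.value ** 2)),
        )

    def __pow__(self, exponent):
        # Sketch only: the exponent must be a plain number, not a Var.
        return Var(self.value ** exponent, ((self, exponent * self.value ** (exponent - 1)),))

    def backward(self):
        """Accumulate d(self)/d(node) into node.grad for every node in the graph."""
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        # Reverse topological order: a node's gradient is complete before it is passed on.
        for node in reversed(order):
            for parent, local in node.parents:
                parent.grad += local * node.grad


a = Var(2.0)
b = Var(6.0)
Q = Var(3.0) * (a ** 3 - b * b / Var(3.0))
Q.backward()
dQda, dQdb = a.grad, b.grad
```

For \(Q = 3(a^3 - b^2/3)\) the analytic derivatives are \(\partial Q/\partial a = 9a^2\) and \(\partial Q/\partial b = -2b\), so `dQda` and `dQdb` can be checked against 36 and -12 at \(a=2\), \(b=6\).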

Using AD - FTorch

use ftorch

type(torch_tensor) :: a, b, Q, multiplier, divisor, dQda, dQdb

real, dimension(2), target :: a_arr, b_arr, Q_arr, dQda_arr, dQdb_arr

real, dimension(1), target :: multiplier_arr, divisor_arr

! Construct input tensors with requires_grad=.true.

a_arr = [2.0, 3.0]

b_arr = [6.0, 4.0]

call torch_tensor_from_array(a, a_arr, torch_kCPU, requires_grad=.true.)

call torch_tensor_from_array(b, b_arr, torch_kCPU, requires_grad=.true.)

! Workaround for scalar multiplication and division using 0D tensors

multiplier_arr = [3.0]

divisor_arr = [3.0]

call torch_tensor_from_array(multiplier, multiplier_arr, torch_kCPU)

call torch_tensor_from_array(divisor, divisor_arr, torch_kCPU)

! Compute some mathematical expression

call torch_tensor_from_array(Q, Q_arr, torch_kCPU)

Q = multiplier * (a**3 - b * b / divisor)

! Reverse mode

call torch_tensor_backward(Q)

call torch_tensor_from_array(dQda, dQda_arr, torch_kCPU)

call torch_tensor_from_array(dQdb, dQdb_arr, torch_kCPU)

call torch_tensor_get_gradient(a, dQda)

call torch_tensor_get_gradient(b, dQdb)

print *, dQda_arr

print *, dQdb_arr

Optimizers and loss functions

- Optimizers

  - Expose torch::optim::SGD, torch::optim::AdamW etc., as well as zero_grad and step methods.

  - This already enables some cool AD applications in FTorch.

- Loss functions

  - We haven’t exposed any built-in loss functions yet.

  - Implemented torch_tensor_sum and torch_tensor_mean, though.

Putting it together - running an optimiser in FTorch

\[\begin{bmatrix}f_1\\f_2\\f_3\\f_4\end{bmatrix}=\mathbf{f}(\mathbf{x};\mathbf{a})=\mathbf{a}\bullet\mathbf{x}\equiv\begin{bmatrix}a_1x_1\\a_2x_2\\a_3x_3\\a_4x_4\end{bmatrix}\] Starting from \(\mathbf{a}=\mathbf{x}:=\begin{bmatrix}1,1,1,1\end{bmatrix}^T\), optimise the \(\mathbf{a}\) vector such that \(\mathbf{f}(\mathbf{x};\mathbf{a})=\mathbf{b}:=\begin{bmatrix}1,2,3,4\end{bmatrix}^T\).

Loss function: \(\ell(\mathbf{a})=\overline{(\mathbf{f}(\mathbf{x};\mathbf{a})-\mathbf{b})^2}\).

Putting it together - running an optimiser in FTorch

In both cases we achieve \(\mathbf{f}(\mathbf{x};\mathbf{a})=\begin{bmatrix}1,2,3,4\end{bmatrix}^T\).
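The optimisation problem above is small enough to reproduce in plain Python. The sketch below uses hand-written gradient descent as an assumed stand-in for the Torch optimizers, with the gradient of the mean-squared loss derived by hand: \(\partial\ell/\partial a_i = 2x_i(a_ix_i-b_i)/4\). The function names, learning rate, and step count are illustrative choices, not values from the FTorch example.

```python
def forward(a, x):
    """Elementwise product f(x; a) = a * x."""
    return [ai * xi for ai, xi in zip(a, x)]


def loss(a, x, b):
    """Mean squared difference between f(x; a) and the target b."""
    f = forward(a, x)
    return sum((fi - bi) ** 2 for fi, bi in zip(f, b)) / len(b)


def loss_gradient(a, x, b):
    """Hand-derived gradient of the mean-squared loss with respect to a."""
    n = len(b)
    return [2.0 * xi * (ai * xi - bi) / n for ai, xi, bi in zip(a, x, b)]


def gradient_descent(a, x, b, learning_rate=1.0, steps=100):
    """Plain gradient descent: a <- a - learning_rate * grad, repeated `steps` times."""
    a = list(a)
    for _ in range(steps):
        grad = loss_gradient(a, x, b)
        a = [ai - learning_rate * gi for ai, gi in zip(a, grad)]
    return a


x = [1.0, 1.0, 1.0, 1.0]
b = [1.0, 2.0, 3.0, 4.0]
a_initial = [1.0, 1.0, 1.0, 1.0]

a_optimised = gradient_descent(a_initial, x, b)
f_optimised = forward(a_optimised, x)
```

With \(\mathbf{x}\) fixed at ones and a learning rate of 1, each step halves the error in every component, so `f_optimised` approaches \([1,2,3,4]^T\) quickly. In FTorch, Torch autograd would compute the gradient and an exposed optimizer would perform the update.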

Case study - UKCA

- Chemistry model used in UKESM and Met Office UM

- 85-200 tracers with 300-700 interactions

- 25% of the UM runtime

- Solver is 40% of UKCA runtime (10% UM)

UKCA Flame Graph by Luke Abraham used with permission.

Case study - UKCA

- Time integration runs on column or slice chunks

- Start with \(\Delta t=3600\).

- If any grid-box fails, halve the step and try again globally.

- Propose to train an ML model to predict step-size given inputs

- Requires large quantities of otherwise useless training data

- A nice ‘safe’ application of machine learning in modelling
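The step-halving strategy described above can be sketched as follows. This is a hypothetical illustration, not UKCA code: the `step_succeeds` criterion, the per-box "stiffness" values, and the minimum step are all assumptions made so the example runs on its own.

```python
def choose_step(gridboxes, step_succeeds, dt_initial=3600.0, dt_min=1.0):
    """Halve the step for the whole chunk until every grid box succeeds.

    Returns the accepted step and the number of attempts made.
    """
    dt = dt_initial
    attempts = 1
    while not all(step_succeeds(box, dt) for box in gridboxes):
        dt /= 2.0
        attempts += 1
        if dt < dt_min:
            raise RuntimeError("no acceptable step found above dt_min")
    return dt, attempts


def step_succeeds(stiffness, dt):
    """Assumed success criterion: the solver converges if dt * stiffness <= 1."""
    return dt * stiffness <= 1.0


# One stiff grid box forces the whole chunk onto a small step.
chunk = [0.0001, 0.0005, 0.002]
dt_accepted, n_attempts = choose_step(chunk, step_succeeds)

# Starting from a good guess (the role proposed for the ML model) avoids the failed attempts.
dt_predicted, n_attempts_predicted = choose_step(chunk, step_succeeds, dt_initial=dt_accepted)
```

The application is 'safe' in the sense that a poor prediction only costs extra attempts: the existing solver still decides whether a step is accepted.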

FTorch: Summary

- Use of ML within traditional numerical models

- A growing area that presents challenges

- Language interoperation

- Exploring options for online training and AD

  - Torch autograd and optimizer exposed using iso_c_binding.

  - Work in progress on setting up online ML training.

Thanks for Listening

Get in touch:

Thanks to Tom Meltzer, Mikolaj Kowalski,

Elliott Kasoar, and the rest of the FTorch team.

The ICCS received support from

FTorch has been supported by

References